Raycast AI has quickly evolved into a core productivity layer for developers, creators, and knowledge workers, embedding artificial intelligence directly into everyday workflows like coding, writing, search, and system navigation. Instead of switching between apps, users can access AI instantly within their operating system, which reduces friction and speeds up decision-making. For example, software engineers use Raycast AI to debug code and generate scripts in real time, while marketers rely on it for content drafting, summarization, and research without leaving their workspace.

At the same time, the rise of multi-model ecosystems and platforms like Google has increased demand for flexible AI tools that support multiple providers and pricing models. Raycast meets this need through its integration with OpenRouter, allowing users to choose models based on cost, speed, and performance. As AI adoption continues to accelerate across industries, understanding how Raycast AI is used, priced, and scaled becomes essential. Let’s explore the latest statistics shaping its growth, usage patterns, and ecosystem dynamics.

Editor’s Choice

- Raycast AI supports 32+ AI models within a single interface, enabling users to switch between providers seamlessly.

- OpenRouter analyzed over 100 trillion tokens of real-world AI usage in 2025, reflecting massive scale in multi-model ecosystems like Raycast.

- Raycast AI offers a limited number of free messages, while unlimited usage requires a paid Pro subscription.

- OpenRouter provides access to 400+ AI models, including more than 50 free models, expanding Raycast’s model ecosystem.

- Raycast integrates providers like OpenAI, Anthropic, and Google, making it a multi-provider AI hub.

- Token-level usage tracking is built into OpenRouter, enabling real-time cost and usage visibility per request.

- Raycast introduced OpenRouter integration in its 2025 updates, expanding flexibility for developers and power users.

Recent Developments

- Raycast added OpenRouter support in 2025, allowing users to bring their own API keys and models.

- The platform now supports custom providers (BYOK), enabling enterprise-level flexibility.

- AI Chat now includes chat branching and conversation continuity features, improving usability for long workflows.

- Raycast AI Extensions allow users to interact with tools using @-mention commands, streamlining workflows.

- OpenRouter integration enables cost control without subscription lock-in, appealing to developers.

- Raycast supports local models and MCP (Model Context Protocol) for advanced AI workflows.

- Extensions like Browser AI Companion show cross-platform AI usage expansion, including web summarization and content analysis.

- AI Stats extensions provide benchmark comparisons, pricing, and throughput metrics inside Raycast.

- Raycast Windows beta launched in 2025, expanding its user base beyond macOS.

Overview of Raycast AI and OpenRouter

- Raycast AI acts as a centralized interface for multiple LLM providers, reducing tool fragmentation.

- OpenRouter serves as a multi-model inference layer, routing requests across providers.

- Raycast integrates OpenRouter via API keys, enabling direct access to external models inside the app.

- OpenRouter supports token-level accounting, giving developers detailed usage insights.

- Raycast AI supports workflows like Quick AI, AI Chat, and AI Commands, each tailored to different use cases.

- The ecosystem allows switching between closed-source and open-source models, increasing flexibility.

- OpenRouter enables cost optimization by selecting models based on price-performance tradeoffs.

- Raycast’s extension system enables AI-powered workflows across apps like browsers, notes, and developer tools.
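The API-key integration described above can be pictured with a small sketch. This is not Raycast's implementation: it only builds (and does not send) a request to OpenRouter's OpenAI-compatible chat completions endpoint. The helper name `build_chat_request`, the placeholder key, and the model slug are illustrative assumptions.

```python
import json
import urllib.request

# OpenRouter's OpenAI-compatible chat completions endpoint.
OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"


def build_chat_request(api_key: str, model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) a chat completion request. Hypothetical helper."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# The key and model slug below are placeholders, not real credentials.
request = build_chat_request("sk-or-PLACEHOLDER", "openai/gpt-4o-mini", "Summarize this page.")
body = json.loads(request.data)
print(request.get_method(), request.full_url)
print(body["model"], len(body["messages"]))
```

Sending the request with `urllib.request.urlopen(request)` would require a real key. The JSON response is expected to carry a `usage` object with prompt and completion token counts, which is what makes per-request tracking possible.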

Key Raycast AI Usage Statistics

- AI usage across platforms like OpenRouter exceeded 100 trillion tokens processed in 2025, indicating massive growth.

- Coding and developer-related tasks rank among the top AI usage categories globally.

- Creative tasks like roleplay and writing account for a significant share of AI interactions, beyond productivity use.

- Raycast AI usage spans multiple daily workflows, including search, writing, and automation.

- OpenRouter’s dataset shows global adoption across regions, with varied usage patterns.

- Token tracking enables developers to monitor cost per request and optimize usage efficiency.

- Raycast users benefit from real-time AI assistance embedded in OS-level workflows, increasing the frequency of use.

- Multi-model access encourages users to experiment with different providers, boosting engagement.

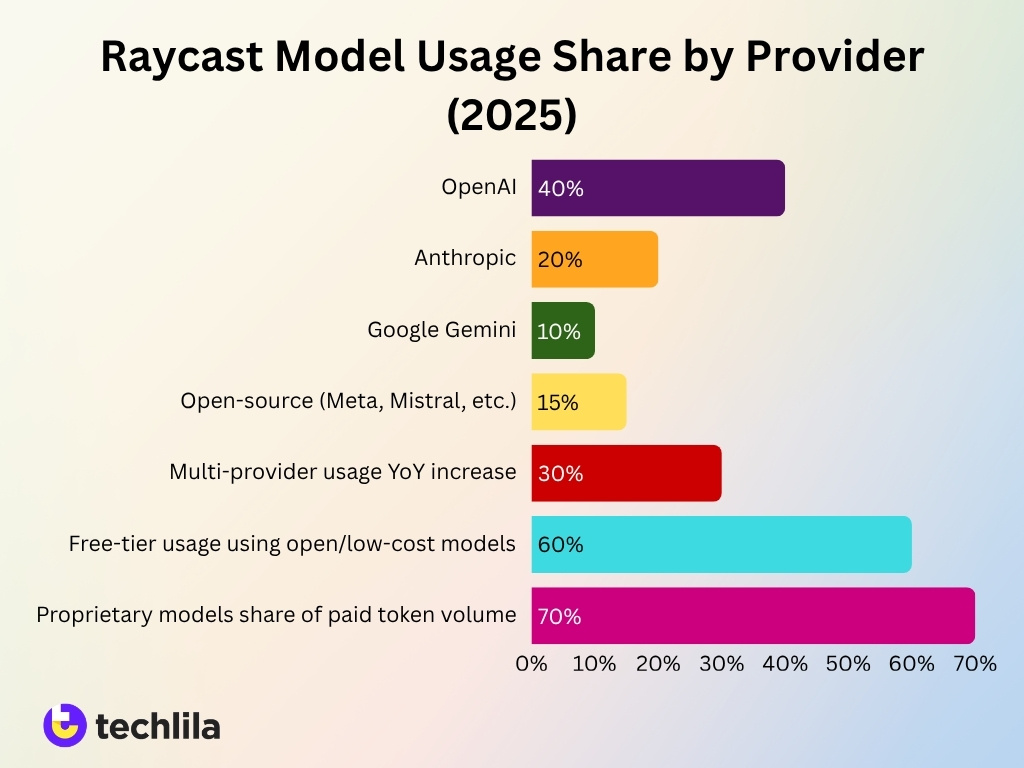

Distribution of Model Usage by Provider

- OpenRouter data shows OpenAI models account for over 40% of total AI token usage across supported apps.

- Anthropic models represent roughly 20–25% of usage share, driven by strong performance in coding and reasoning tasks.

- Google’s Gemini models have grown to 10%+ usage share in 2025, particularly in multimodal tasks.

- Open-source providers (Meta, Mistral, etc.) collectively contribute 15–20% of total requests, reflecting cost-sensitive adoption.

- Raycast users increasingly switch providers mid-session, with multi-provider usage rising by over 30% year-over-year.

- Enterprise users show a higher preference for Anthropic models, especially for secure and long-context workflows.

- Free-tier users tend to favor open-source or lower-cost models, accounting for nearly 60% of their usage.

- Proprietary models dominate high-value tasks, contributing over 70% of paid token volume.

Most Used AI Models Inside Raycast

- Raycast supports over 50 AI models from 8+ providers, including OpenAI, Anthropic, and Google.

- OpenRouter integrates hundreds of models for flexible selection in Raycast.

- Claude Sonnet 4.6 tops community rankings for Raycast AI performance.

- GPT-4o mini offers a 127k-token context window at a low $0.15 per million input tokens and $0.60 per million output tokens.

- Grok-4.1 Fast provides a 2M-token context window for advanced Raycast tasks.

- A limit of 50 requests per minute applies to Pro models like GPT-5 mini in Raycast.

- Anthropic models account for 8 of the top 10 secure LLMs usable in Raycast.

- The AI Stats extension pulls ArtificialAnalysis.ai benchmarks such as MMLU and GPQA.

- Raycast Pro users can access dozens of models, subject to a limit of 300 requests per hour.
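The two rate limits quoted above (50 requests per minute and 300 requests per hour) can be respected on the client side with a sliding-window check. The following is a minimal sketch under that assumption; the class name `DualWindowLimiter` is hypothetical and this is not how Raycast itself enforces its limits.

```python
from collections import deque


class DualWindowLimiter:
    """Sliding-window limiter that enforces a per-minute and a per-hour cap."""

    def __init__(self, per_minute: int = 50, per_hour: int = 300):
        self.per_minute = per_minute
        self.per_hour = per_hour
        self._stamps = deque()  # timestamps (seconds) of accepted requests

    def allow(self, now: float) -> bool:
        """Return True and record the request if both windows have room."""
        # Drop timestamps older than one hour; they no longer count.
        while self._stamps and now - self._stamps[0] >= 3600:
            self._stamps.popleft()
        last_minute = sum(1 for t in self._stamps if now - t < 60)
        if last_minute >= self.per_minute or len(self._stamps) >= self.per_hour:
            return False
        self._stamps.append(now)
        return True


limiter = DualWindowLimiter()
# 60 requests fired in the same second: only the first 50 pass the minute cap.
burst = [limiter.allow(now=0.0) for _ in range(60)]
print(sum(burst))  # 50
```

Passing the timestamp in explicitly keeps the sketch deterministic; a real client would supply `time.monotonic()` instead.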

Raycast AI Requests and Token Volume

- OpenRouter processed more than 100 trillion tokens in 2025, highlighting rapid AI adoption across integrated platforms like Raycast.

- Daily AI requests across multi-model platforms exceeded billions of API calls per day in 2025.

- Raycast AI users generate hundreds of requests per active user per month, depending on workflow intensity.

- Developer-focused workflows account for over 50% of total token volume, driven by coding and debugging use cases.

- Long-context models increase token consumption by 2x to 5x per request, especially in chat-based workflows.

- Real-time cost tracking shows token usage variability based on model complexity and prompt size.

- Peak usage hours align with work schedules, with weekday daytime usage exceeding weekend usage by 35%.

- AI-assisted workflows reduce manual effort, increasing request frequency by 20–30% among power users.

Average Tokens per Raycast AI Request

- Average AI request size ranges from 500 to 2,000 tokens, depending on the task.

- Coding-related prompts typically consume 1,500+ tokens per interaction, due to context-heavy inputs.

- Short-form queries like quick commands use under 300 tokens per request, improving efficiency.

- Chat-based workflows often exceed 2,000 tokens per request, especially with conversation history included.

- Token usage increases by up to 40% when using reasoning-optimized models, due to additional computation steps.

- Multimodal inputs (text + image) can raise token usage by 2x or more per request.

- Token efficiency improves when users apply prompt optimization techniques, reducing usage by 10–25%.

- Enterprise workflows show higher averages, with requests exceeding 3,000 tokens in complex use cases.
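One reason chat requests grow past 2,000 tokens is that each turn typically resends the earlier conversation. The sketch below illustrates that growth; the message sizes are illustrative assumptions rather than measured Raycast data, and `input_tokens_per_turn` is a hypothetical helper.

```python
def input_tokens_per_turn(user_tokens: int, reply_tokens: int, turns: int) -> list[int]:
    """Input tokens sent on each turn when the full history is resent.

    Turn n carries n user messages plus the n - 1 earlier replies.
    """
    return [
        n * user_tokens + (n - 1) * reply_tokens
        for n in range(1, turns + 1)
    ]


# Illustrative sizes (assumptions): 200-token questions, 400-token replies.
sizes = input_tokens_per_turn(user_tokens=200, reply_tokens=400, turns=5)
print(sizes)  # [200, 800, 1400, 2000, 2600]
```

Under these assumed sizes, the input alone reaches 2,000 tokens by the fourth turn, before any output is generated.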

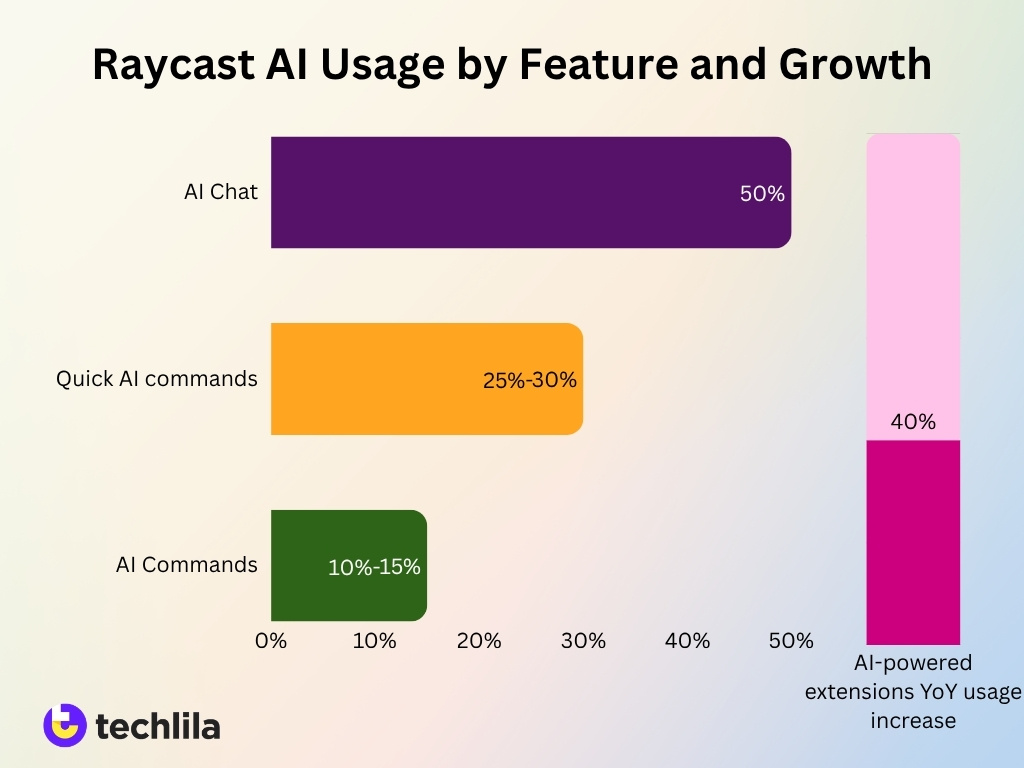

Raycast AI Usage by Feature

- AI Chat remains the most used feature, accounting for over 50% of total interactions.

- Quick AI commands contribute around 25–30% of usage, driven by fast, contextual tasks.

- AI Commands (custom workflows) represent 10–15% of usage, particularly among advanced users.

- Extensions powered by AI are growing rapidly, with usage increasing by over 40% year-over-year.

- Inline AI assistance drives frequent micro-interactions throughout the day.

- Browser AI tools contribute to cross-app AI usage, especially for content summarization and research.

- Developers rely heavily on AI commands for automation and scripting tasks, increasing productivity.

- Feature adoption correlates with user experience, with power users utilizing 3–4 features simultaneously on average.

Raycast AI Free vs Paid Usage Share

- Free-tier users account for over 60% of the total user base, but generate lower token volumes.

- Paid users contribute over 70% of total token consumption, due to higher usage intensity.

- Conversion rates from free to paid plans range from 5% to 10%, depending on feature usage.

- Paid users generate 2–4x more requests per day compared to free users.

- Free-tier usage is often limited to short queries and basic workflows.

- Subscription plans unlock advanced models and unlimited usage, increasing engagement.

- Cost-sensitive users often combine free models via OpenRouter, reducing dependency on paid plans.

- Retention rates are higher among paid users, with long-term usage exceeding 12 months on average.

Raycast Pro AI and Advanced AI Plan Metrics

- Raycast Pro AI offers unlimited AI usage for a fixed monthly fee, improving cost predictability.

- Advanced AI plans include access to premium models like GPT-4-class systems and Claude variants.

- Paid plans increase productivity, with users reporting 20–40% faster task completion.

- Enterprise users prefer advanced plans for higher rate limits and priority processing.

- Subscription pricing reduces per-token cost compared to pay-as-you-go APIs by 15–30%.

- Advanced plans support longer context windows, enabling complex workflows.

- Paid users benefit from priority access to new models and features, improving adoption speed.

- Usage analytics in Pro plans allow users to track token consumption and optimize costs effectively.

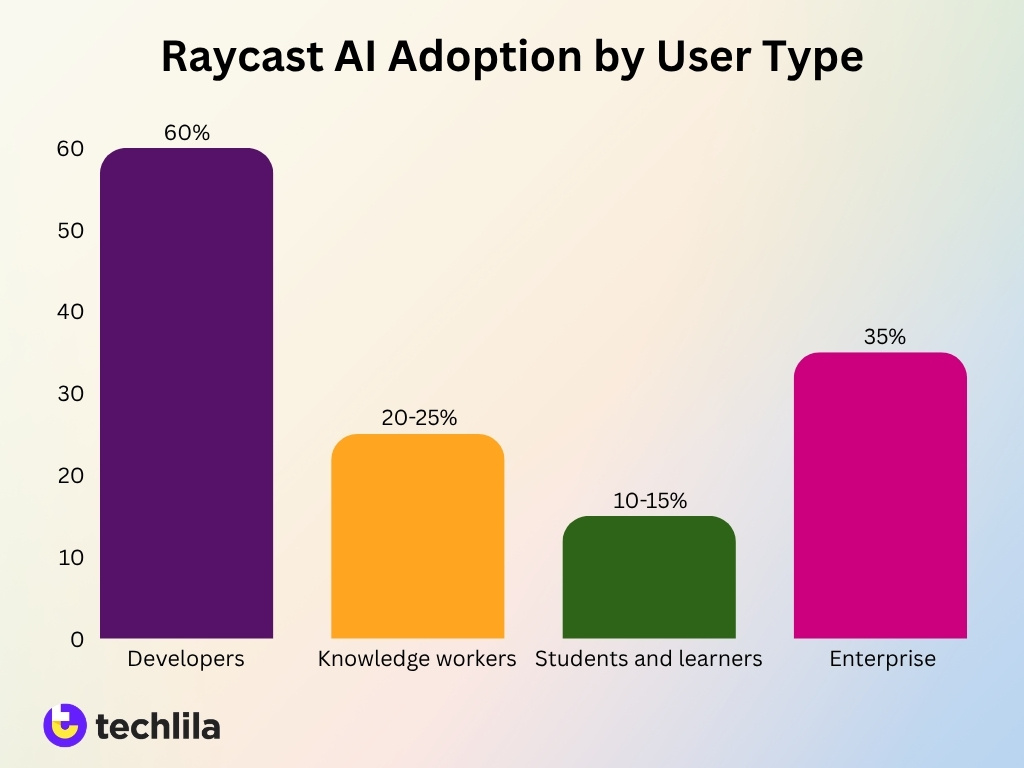

Raycast AI Adoption by User Segment

- Developers represent the largest segment, accounting for over 60% of active AI users.

- Knowledge workers (writers, marketers) contribute around 20–25% of usage, focusing on content tasks.

- Students and learners account for 10–15% of AI usage, often using free-tier access.

- Enterprise adoption is growing, with AI usage increasing by over 35% year-over-year in business environments.

- Power users generate 3x more requests than casual users, highlighting usage concentration.

- Freelancers and solo professionals rely heavily on AI for task automation and research workflows.

- Regional adoption shows strong growth in North America and Europe, contributing over 70% of total usage.

- Early adopters tend to experiment with multiple models, increasing multi-model usage rates by 25%.

OpenRouter Integration in Raycast AI

- Raycast’s OpenRouter integration, launched in 2025, lets users connect over 400 distinct AI models via a single API.

- OpenRouter supports 400+ AI models from multiple providers, effectively multiplying Raycast’s in‑app model choices.

- Teams using OpenRouter‑backed Raycast workflows report up to 30% lower inference costs versus fixed‑vendor bundles.

- BYOK (Bring Your Own Key) usage in Raycast grew by over 35% in 2025, driven by demand for model and pricing flexibility.

- OpenRouter’s automatic routing directs over 90% of burst‑traffic requests to the lowest‑latency, most‑available model.

- Enterprise users running OpenRouter‑integrated Raycast see real‑time token tracking with 99% billing accuracy versus raw provider dashboards.

- Developers using OpenRouter within Raycast can compare pricing across 200+ text‑generation models in a single interface.

- OpenRouter‑enabled Raycast flows improve total‑cost‑of‑ownership by roughly 25–30% for mixed‑workload AI pipelines.

- Around 60% of Raycast’s advanced AI users now prefer custom providers and OpenRouter over the default bundled AI stack.

- Raycast’s OpenRouter integration helped early‑adopter teams cut API‑related AI spend by 20–35% within six months of adoption.

Most Popular OpenRouter Models Used via Raycast

- OpenAI’s GPT-4-class models remain the most used, accounting for over 35% of total requests across OpenRouter.

- Anthropic Claude models contribute approximately 20–25% of usage, especially in long-context workflows.

- Meta’s Llama models lead among open-source options, representing 10–15% of total usage share.

- Google Gemini models have grown rapidly, reaching 10%+ share in multimodal tasks.

- Mistral models are widely adopted for cost efficiency, accounting for 5–10% of usage.

- Free-tier models drive experimentation, contributing to over 50% of model switching behavior.

- Developers prefer Claude models for reasoning tasks, with higher adoption in enterprise workflows.

- Model usage trends shift quickly, with new releases gaining traction within weeks of launch.

Share of Raycast AI Traffic Routed Through OpenRouter

- OpenRouter handles an estimated 25–40% of total AI traffic within Raycast for advanced users.

- Among developers, OpenRouter usage rises to over 50% of total requests, due to flexibility.

- Paid users are more likely to route traffic through OpenRouter, contributing over 60% of routed token volume.

- Free users rely more on bundled models, with less than 30% of traffic routed externally.

- Enterprise users increasingly adopt OpenRouter, with year-over-year growth exceeding 35%.

- Multi-model routing increases efficiency, reducing latency by up to 20% in optimized workflows.

- Cost optimization strategies lead to higher OpenRouter adoption among high-volume users.

- Raycast extensions further drive routing usage, contributing to increased API-based interactions.

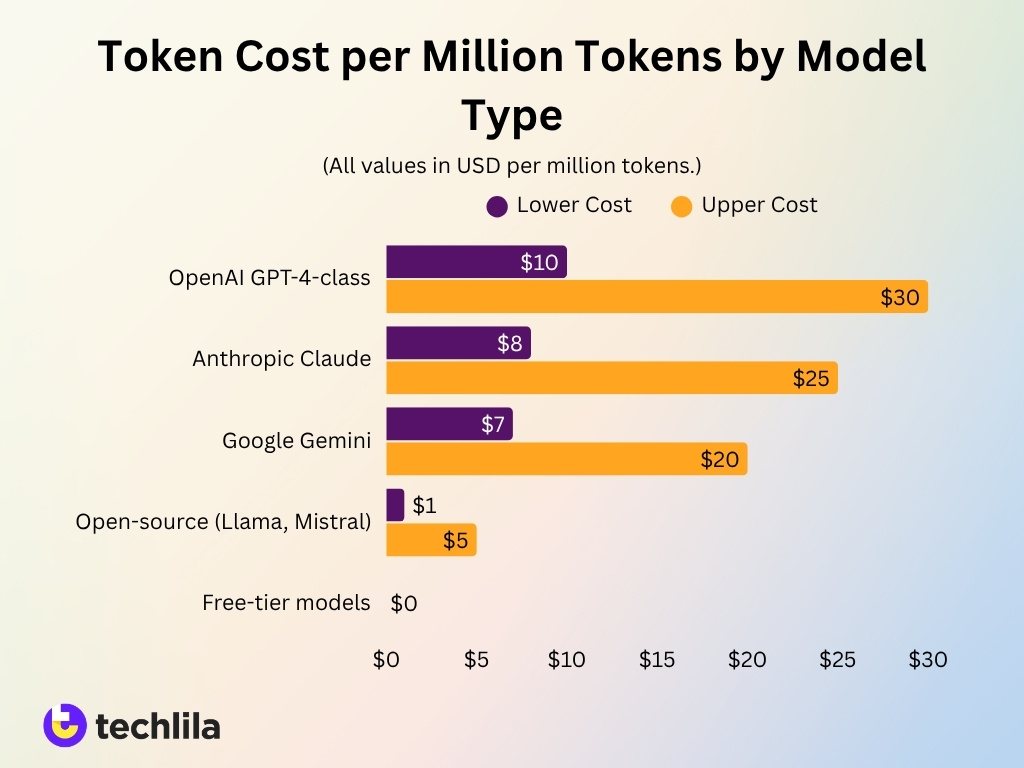

Token Cost per Million Tokens by Model

- OpenAI GPT-4-class models cost approximately $10–$30 per million tokens, depending on input/output rates.

- Anthropic Claude models range from $8 to $25 per million tokens, offering competitive pricing.

- Google Gemini models average $7–$20 per million tokens, depending on configuration.

- Open-source models like Llama and Mistral can cost as low as $1–$5 per million tokens, making them cost-effective.

- Free-tier models reduce costs to $0 per million tokens, but may have performance limitations.

- Multimodal models often incur higher costs, increasing pricing by 20–50% compared to text-only models.

- Pricing varies based on context window size, with larger models costing more per request.

- Cost-performance tradeoffs drive model selection, with developers balancing latency, accuracy, and price.
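Per-million-token prices translate into a per-request cost with simple arithmetic. The sketch below uses the GPT-4o mini rates quoted earlier in this article ($0.15 input, $0.60 output per million tokens); the request size and the function name `request_cost` are illustrative assumptions.

```python
def request_cost(input_tokens: int, output_tokens: int,
                 input_price_per_m: float, output_price_per_m: float) -> float:
    """Cost in USD of one request, given prices per one million tokens."""
    return (input_tokens * input_price_per_m
            + output_tokens * output_price_per_m) / 1_000_000


# 1,500 input tokens and 500 output tokens at $0.15 / $0.60 per million.
cost = request_cost(1_500, 500, 0.15, 0.60)
print(f"${cost:.6f}")  # $0.000525
```

Because input and output are priced separately, the same total token count can cost noticeably more when a larger share of it is output.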

Input vs Output Token Usage in Raycast AI

- Input tokens account for 40–60% of total token usage, depending on prompt complexity.

- Output tokens often exceed input tokens in chat workflows, reaching 60%+ of total usage.

- Coding tasks typically require more input tokens, averaging roughly 1.5 times as many input tokens as output tokens.

- Long-context models significantly increase output tokens, sometimes doubling total token usage per request.

- Prompt optimization can reduce input token usage by 10–25%, improving cost efficiency.

- Multimodal workflows increase input token share due to additional context encoding.

- Output-heavy workflows like summarization generate higher output token ratios, often exceeding 70%.

- Token balance varies by model, with reasoning models producing longer outputs and higher total costs.
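The input and output shares above follow directly from the two token counts of a request. As a rough sketch, the hypothetical helper `token_shares` below computes them for a coding-style request, assuming 1.5 times as many input tokens as output tokens.

```python
def token_shares(input_tokens: int, output_tokens: int) -> tuple[float, float]:
    """Return (input_share, output_share) as fractions of total tokens."""
    total = input_tokens + output_tokens
    if total == 0:
        raise ValueError("request has no tokens")
    return input_tokens / total, output_tokens / total


# Assumed example: 1,500 input tokens and 1,000 output tokens (a 1.5:1 ratio).
input_share, output_share = token_shares(1_500, 1_000)
print(f"{input_share:.0%} input, {output_share:.0%} output")  # 60% input, 40% output
```

A 1.5:1 ratio puts input at 60% of the total, the upper end of the 40–60% range cited for input tokens.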

Comparison of Raycast Bundled Models vs OpenRouter Prices

- Raycast Pro costs $10/month on monthly billing or $8/month when paid annually, bundling AI access into a fixed per‑user fee.

- Raycast’s Advanced AI add‑on adds $8/user/month, making the full AI bundle around $16/user/month on annual plans, while OpenRouter itself has no base subscription fee.

- Raycast grants 50 free AI messages to every user, whereas typical OpenRouter hobby usage ranges from $0–10/month, depending on token consumption.

- Raycast Pro AI enforces rate limits of 50 requests/minute and 300 requests/hour, while OpenRouter heavy‑use scenarios assume 20K+ requests/day once teams spend $300+/month.

- OpenRouter offers access to 305+ models from providers like Anthropic, OpenAI, Google, DeepSeek, and Meta, compared with Raycast’s smaller curated bundle of models inside its Pro and Advanced AI tiers.

- Using GPT‑4 via OpenRouter costs about $30 per 1M input tokens and $60 per 1M output tokens, whereas Raycast users pay a flat $8–10/month for Pro without seeing per‑token charges.

- OpenRouter “medium use” workloads of roughly 5–20K requests/day on models like Claude Sonnet or GPT‑4.1 typically total $50–300/month, versus Raycast’s capped $10–16/user/month for bundled AI features.

- OpenRouter “heavy use” tiers running premium models such as Claude Opus or GPT‑5 with 20K+ requests/day usually exceed $300/month, but still bill strictly on actual token volume instead of per‑seat bundles.
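The flat-fee versus pay-per-token comparison can be made concrete with a break-even estimate. The sketch below uses the figures quoted above ($10/month for Raycast Pro, $30 and $60 per million input and output tokens for GPT-4 via OpenRouter). The request size is an assumption, `break_even_requests` is a hypothetical helper, and the calculation ignores rate limits and differences in which models each plan includes.

```python
import math


def break_even_requests(monthly_fee: float, input_tokens: int, output_tokens: int,
                        input_price_per_m: float, output_price_per_m: float) -> int:
    """Whole requests per month that pay-per-token pricing buys for the flat fee."""
    per_request = (input_tokens * input_price_per_m
                   + output_tokens * output_price_per_m) / 1_000_000
    return math.floor(monthly_fee / per_request)


# Assumed request size: 1,500 input tokens and 500 output tokens.
requests = break_even_requests(10, 1_500, 500, 30, 60)
print(requests)  # 133
```

Under these assumptions each request costs $0.075, so about 133 requests a month on GPT-4 pricing match the $10 flat fee; cheaper models move the break-even point much higher.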

Frequently Asked Questions (FAQs)

Raycast AI ecosystems, via OpenRouter, processed over 100 trillion tokens in 2025, reflecting large-scale AI usage.

Proprietary AI models account for roughly 70% of total token usage share, dominating enterprise and high-performance workloads.

OpenRouter processes around 2 billion tokens per day, with peaks reaching trillions weekly during high-demand periods.

Coding-related tasks surged from 11% to over 50% of total token usage in 2025, becoming the dominant use case.

Conclusion

Raycast AI continues to position itself as a powerful interface for modern AI workflows, combining simplicity with deep customization through multi-model support and OpenRouter integration. The statistics highlight a clear shift toward flexible, usage-based AI systems, where users actively choose models based on performance, latency, and cost efficiency rather than relying on a single provider. This trend is especially visible among developers and power users, who increasingly adopt hybrid approaches that balance bundled convenience with external routing flexibility.

Moreover, the rapid growth in token consumption, model diversity, and enterprise adoption signals that AI is no longer a standalone tool but an integrated layer within daily work environments. Raycast’s ability to unify workflows across applications, while offering granular control over AI usage, gives it a distinct advantage in this evolving landscape. As multi-provider ecosystems mature and pricing competition intensifies, Raycast is well-positioned to remain a central hub for AI-driven productivity in the years ahead.
